Disk Management: RAID 10

Published: 2014-09-05 14:02:11 | Author: 知识屋

1. What is RAID 10

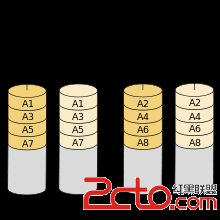

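RAID 10/01 comes in two variants: RAID 1+0 and RAID 0+1.

RAID 1+0 mirrors first and then stripes. The disks are divided into two groups, each group runs as a RAID 1 mirror, and the two groups together form a minimal RAID 0 stripe set.

RAID 0+1 works the other way round: it stripes first and then mirrors the data across two groups of disks. The disks are divided into two groups, each group runs as a RAID 0 stripe set, and the two groups together form a minimal RAID 1 mirror.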

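In terms of performance, RAID 0+1 is said to read and write faster than RAID 1+0, although with the same disks the two layouts usually perform about the same.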

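In terms of reliability, when one disk in a four-disk RAID 1+0 array fails, the other three disks keep running. In RAID 0+1, as soon as one disk fails, the other disk in the same RAID 0 group also stops working, so only two disks remain in service and reliability is lower.

For this reason RAID 10 is far more common than RAID 01. Most retail motherboards support RAID 0/1/5/10 but not RAID 01.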
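The reliability argument above can be checked by brute force. The following is a minimal sketch, assuming four disks numbered 0 to 3 that are split into two groups of two. The function names `group_of` and `survivors` are illustrative and do not belong to any RAID tool. The script counts how many of the six possible two-disk failures each layout survives.

```shell
#!/bin/sh
# Disks are numbered 0..3; group A = {0,1}, group B = {2,3} (assumed layout).
# RAID 1+0: A and B are mirrors that are striped together.
# RAID 0+1: A and B are stripe sets that are mirrored together.

group_of() {
    # Disks 0 and 1 belong to group A, disks 2 and 3 to group B.
    case "$1" in
        0|1) echo A ;;
        *)   echo B ;;
    esac
}

survivors() {
    # $1 is "10" or "01"; prints how many two-disk failures the layout survives.
    layout=$1
    count=0
    for first in 0 1 2; do
        second=$((first + 1))
        while [ "$second" -le 3 ]; do
            if [ "$(group_of "$first")" = "$(group_of "$second")" ]; then
                same_group=yes
            else
                same_group=no
            fi
            # RAID 1+0 is lost only when both halves of one mirror fail.
            # RAID 0+1 survives only when both failures hit the same stripe set.
            if [ "$layout" = "10" ] && [ "$same_group" = "no" ]; then
                count=$((count + 1))
            elif [ "$layout" = "01" ] && [ "$same_group" = "yes" ]; then
                count=$((count + 1))
            fi
            second=$((second + 1))
        done
    done
    echo "$count"
}

echo "RAID 1+0 survives $(survivors 10) of 6 two-disk failures"
echo "RAID 0+1 survives $(survivors 01) of 6 two-disk failures"
```

Under these assumptions the script reports 4 of 6 for RAID 1+0 and 2 of 6 for RAID 0+1, which is consistent with the comparison above.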

2. RAID 10 demonstration

Step 1: Partition the disks

[plain]

# Partition sdb, sdc, sdd, and sde in turn

[root@serv01 ~]# fdisk /dev/sdb

[root@serv01 ~]# fdisk /dev/sdc

[root@serv01 ~]# fdisk /dev/sdd

[root@serv01 ~]# fdisk /dev/sde

[root@serv01 ~]# ls /dev/sd*

sda sda1 sda2 sda3 sda4 sda5 sdb sdb1 sdc sdc1 sdd sdd1 sde sde1 sdf sdg

[root@serv01 ~]# mdadm -C /dev/md0 -l 1 -n2 /dev/md0 /dev/md1

mdadm: device /dev/md0 not suitable for any style of array

Step 2: Create the RAID 10 array

[plain]

# Create /dev/md0 as RAID 1

[root@serv01 ~]# mdadm -C /dev/md0 -l 1 -n2 /dev/sdb1 /dev/sdc1

mdadm: Note: this array has metadata at the start and

may not be suitable as a boot device. If you plan to

store '/boot' on this device please ensure that

your boot-loader understands md/v1.x metadata, or use

--metadata=0.90

Continue creating array? y

mdadm: Defaulting to version 1.2 metadata

mdadm: array /dev/md0 started.

# Create /dev/md1 as RAID 1

[root@serv01 ~]# mdadm -C /dev/md1 -l 1 -n2 /dev/sdd1 /dev/sde1

mdadm: Note: this array has metadata at the start and

may not be suitable as a boot device. If you plan to

store '/boot' on this device please ensure that

your boot-loader understands md/v1.x metadata, or use

--metadata=0.90

Continue creating array? y

mdadm: Defaulting to version 1.2 metadata

mdadm: array /dev/md1 started.

# Create /dev/md10 as RAID 0 on top of the two mirrors

[root@serv01 ~]# mdadm -C /dev/md10 -l 0 -n2 /dev/md0 /dev/md1

mdadm: Defaulting to version 1.2 metadata

mdadm: array /dev/md10 started.

[root@serv01 ~]# cat /proc/mdstat

Personalities : [raid1] [raid0]

md10 : active raid0 md1[1] md0[0]

4188160 blocks super 1.2 512k chunks

md1 : active raid1 sde1[1] sdd1[0]

2095415 blocks super 1.2 [2/2] [UU]

md0 : active raid1 sdc1[1] sdb1[0]

2095415 blocks super 1.2 [2/2] [UU]

unused devices: <none>

[root@serv01 ~]# mkfs.ext4 /dev/md10

mke2fs 1.41.12 (17-May-2010)

Filesystem label=

OS type: Linux

Block size=4096 (log=2)

Fragment size=4096 (log=2)

Stride=128 blocks, Stripe width=256 blocks

262144 inodes, 1047040 blocks

52352 blocks (5.00%) reserved for the superuser

First data block=0

Maximum filesystem blocks=1073741824

32 block groups

32768 blocks per group, 32768 fragments per group

8192 inodes per group

Superblock backups stored on blocks:

32768, 98304, 163840, 229376, 294912, 819200, 884736

Writing inode tables: done

Creating journal (16384 blocks): done

Writing superblocks and filesystem accounting information: done

This filesystem will be automatically checked every 39 mounts or

180 days, whichever comes first. Use tune2fs -c or -i to override.

[root@serv01 ~]# mkdir /web

[root@serv01 ~]# mount /dev/md10 /web

[root@serv01 ~]# df -h

Filesystem Size Used Avail Use% Mounted on

/dev/sda2 9.7G 1.1G 8.1G 12% /

tmpfs 385M 0 385M 0% /dev/shm

/dev/sda1 194M 25M 160M 14% /boot

/dev/sda5 4.0G 137M 3.7G 4% /opt

/dev/sr0 3.4G 3.4G 0 100% /iso

/dev/md10 4.0G 72M 3.7G 2% /web

[root@serv01 ~]# mdadm --detail --scan

ARRAY /dev/md0 metadata=1.2 name=serv01.host.com:0 UUID=78656148:8251f76a:a758c84e:f8927ae0

ARRAY /dev/md1 metadata=1.2 name=serv01.host.com:1 UUID=176f932e:a6451cd4:860a9cf7:847b51b7

ARRAY /dev/md10 metadata=1.2 name=serv01.host.com:10 UUID=0428d240:4e9d097a:80bfe439:2802ff3e

[root@serv01 ~]# mdadm --detail --scan >/etc/mdadm.conf

[root@serv01 ~]# vim /etc/fstab

[root@serv01 ~]# echo "/dev/md10 /web ext4 defaults 1 2" >> /etc/fstab
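An fstab entry is easy to get wrong, because a single missing space merges two fields. As a minimal sketch, the entry can be built with `printf` so that all six fields are always present. The helper name `fstab_line` is hypothetical, and the default `nofail` option is an assumption of this sketch that the original walkthrough did not use. `nofail` tells the boot process not to treat a missing device as an error, which can matter if the array is not yet assembled at boot. Whether it would change the outcome of this particular setup is not verified here.

```shell
#!/bin/sh
# Build one /etc/fstab line. This only prints text and modifies no file.
# Fields: device, mount point, filesystem type, options, dump flag, fsck pass.
fstab_line() {
    device=$1
    mount_point=$2
    fs_type=$3
    # Assumed default: add nofail so a missing array is not a boot error.
    options=${4:-defaults,nofail}
    # printf keeps the six fields separated by single spaces.
    printf '%s %s %s %s %s %s\n' \
        "$device" "$mount_point" "$fs_type" "$options" 1 2
}

fstab_line /dev/md10 /web ext4
# To append for real (as root):
#   fstab_line /dev/md10 /web ext4 >> /etc/fstab
```

Passing a fourth argument, for example `fstab_line /dev/md10 /web ext4 defaults`, reproduces the options used in the walkthrough.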

Step 3: After a reboot the system fails to start, and the RAID 10 array as configured is not usable

[plain]

[root@serv01 ~]# reboot

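# After the reboot the system does not start normally; delete the RAID 10 line that was added to /etc/fstab

# The original note here says that only hardware RAID can do this and that software RAID is for demonstration only. That is too strong: Linux md software RAID does support nested arrays. A more likely cause is that /dev/md10 was not assembled under that name when /etc/fstab was processed at boot.

# Note: in this state the root partition is mounted read-only by default and has to be remounted read-write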

[root@serv01 ~]# mount -o remount,rw /

Step 4: Reassemble the arrays under fixed names; the setup still does not work normally

[plain]

[root@serv01 ~]# mdadm --assemble /dev/md10 /dev/md0 /dev/md1

mdadm: no correct container type: /dev/md0

mdadm: /dev/md0 has no superblock - assembly aborted

[root@serv01 ~]# mdadm --assemble /dev/md0 /dev/sdb1 /dev/sdc1

mdadm: cannot open device /dev/sdb1: Device or resource busy

mdadm: /dev/sdb1 has no superblock - assembly aborted

[root@serv01 ~]# mdadm --assemble /dev/md0 /dev/sdc1 /dev/sdc1

[root@serv01 ~]# mdadm --manage /dev/md0 --stop

mdadm: stopped /dev/md0

[root@serv01 ~]# mdadm --manage /dev/md1 --stop

mdadm: stopped /dev/md1

[root@serv01 ~]# mdadm --assemble /dev/md0 /dev/sdb1 /dev/sdc1

mdadm: /dev/md0 has been started with 2 drives.

[root@serv01 ~]# mdadm --assemble /dev/md1 /dev/sdd1 /dev/sde1

mdadm: /dev/md1 has been started with 2 drives.

[root@serv01 ~]# mdadm --assemble /dev/md10 /dev/md0 /dev/md1

mdadm: /dev/md10 has been started with 2 drives.

[root@serv01 ~]# cat /proc/mdstat

Personalities : [raid1] [raid0]

md10 : active raid0 md0[0] md1[1]

4188160 blocks super 1.2 512k chunks

md1 : active raid1 sdd1[0] sde1[1]

2095415 blocks super 1.2 [2/2] [UU]

md0 : active raid1 sdb1[0] sdc1[1]

2095415 blocks super 1.2 [2/2] [UU]

unused devices: <none>
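Reading `/proc/mdstat` by eye works for three arrays, but the check can also be scripted. The following is a minimal sketch, assuming that the status line of each redundant array ends in a field such as `[UU]`, where `_` marks a missing or failed member. `degraded_arrays` is a hypothetical helper name, and the sample input is the output above with one member of md1 marked as failed purely for illustration.

```shell
#!/bin/sh
# Print the name of every md array whose member-status field contains "_".
# Reads mdstat-format text on stdin. RAID 0 arrays have no such field,
# so they are never reported by this check.
degraded_arrays() {
    awk '
        /^md[0-9]+ :/ { name = $1 }   # remember the array named on this line
        /\[[U_]+\]/ {                 # status line such as "[2/2] [UU]"
            if ($NF ~ /_/) print name
        }
    '
}

# Hypothetical state: sde1 has failed, so md1 shows [2/1] [U_].
degraded_arrays <<'EOF'
Personalities : [raid1] [raid0]
md10 : active raid0 md0[0] md1[1]
      4188160 blocks super 1.2 512k chunks
md1 : active raid1 sdd1[0] sde1[1](F)
      2095415 blocks super 1.2 [2/1] [U_]
md0 : active raid1 sdb1[0] sdc1[1]
      2095415 blocks super 1.2 [2/2] [UU]
unused devices: <none>
EOF
# prints: md1
```

On a live system the same function could be fed with `degraded_arrays < /proc/mdstat`. Empty output means no array reported a missing member.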

Step 5: The experiment is finished; stop the arrays and wipe the md metadata from the disks

[plain]

[root@serv01 ~]# mdadm --manage /dev/md0 --stop

mdadm: Cannot get exclusive access to /dev/md0:Perhaps a running process, mounted filesystem or active volume group?

[root@serv01 ~]# mdadm --manage /dev/md10 --stop

mdadm: stopped /dev/md10

[root@serv01 ~]# mdadm --manage /dev/md0 --stop

mdadm: stopped /dev/md0

[root@serv01 ~]# mdadm --manage /dev/md1 --stop

mdadm: stopped /dev/md1

[root@serv01 ~]# mdadm --misc --zero-superblock /dev/sdb1

[root@serv01 ~]# mdadm --misc --zero-superblock /dev/sdc1

[root@serv01 ~]# mdadm --misc --zero-superblock /dev/sdd1

[root@serv01 ~]# mdadm --misc --zero-superblock /dev/sde1

[root@serv01 ~]# mdadm -E /dev/sdb1

mdadm: No md superblock detected on /dev/sdb1.

[root@serv01 ~]# mdadm -E /dev/sdd1

mdadm: No md superblock detected on /dev/sdd1.

[root@serv01 ~]# mdadm -E /dev/sdc1

mdadm: No md superblock detected on /dev/sdc1.

[root@serv01 ~]# mdadm -E /dev/sde1

mdadm: No md superblock detected on /dev/sde1.
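The transcript above shows that the order matters: `/dev/md0` cannot be stopped while `/dev/md10` is still built on top of it. A minimal sketch of the same cleanup as a script follows. It only prints the commands by default, so the sequence can be reviewed before anything destructive runs. The `DRY_RUN` variable and the `run` and `teardown` helpers are assumptions of this sketch, not mdadm features.

```shell
#!/bin/sh
# Print each command instead of running it, unless DRY_RUN=0 is set.
run() {
    if [ "${DRY_RUN:-1}" = "0" ]; then
        "$@"
    else
        echo "$*"
    fi
}

teardown() {
    # Stop the top-level stripe first, then the two mirrors beneath it.
    for array in /dev/md10 /dev/md0 /dev/md1; do
        run mdadm --stop "$array"
    done
    # Erase the md superblock on every member partition given as an argument.
    for part in "$@"; do
        run mdadm --zero-superblock "$part"
    done
}

teardown /dev/sdb1 /dev/sdc1 /dev/sdd1 /dev/sde1
```

Running the script with `DRY_RUN=0` as root would execute the commands for real, which destroys the arrays, so the printed sequence is worth checking against the device names on the machine first.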
