Linux Memory Management: The Buddy System (Memory Allocation)

Published: 2014-09-05 16:36:49. Author: 知识屋 (www.zhishiwu.com)

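The buddy-system allocator's interfaces fall roughly into two families. The __get_free_pages() family returns the linear address of the first allocated page; the alloc_pages() family returns the address of the page descriptor (struct page). Whichever family is used, the request ultimately goes through alloc_pages().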
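The difference between the two return values can be illustrated with a small userspace model. This is only a sketch under stated assumptions: a flat descriptor array, a directly mapped page, 4 KiB pages, and a 32-bit x86 style base address. The names `page_model`, `mem_map_model`, `model_page_to_pfn` and `model_page_address` are hypothetical and are not kernel APIs.

```c
#include <assert.h>

#define PAGE_SHIFT  12            /* 4 KiB pages (assumed) */
#define PAGE_OFFSET 0xC0000000UL  /* base of the direct mapping (assumed) */
#define NR_FRAMES   16            /* size of the toy descriptor array */

struct page_model { int unused; };  /* stand-in for struct page */

/* One descriptor per page frame, indexed by page frame number. */
static struct page_model mem_map_model[NR_FRAMES];

/* Page frame number = index of the descriptor in the descriptor array. */
static unsigned long model_page_to_pfn(const struct page_model *page)
{
    assert(page >= mem_map_model && page < mem_map_model + NR_FRAMES);
    return (unsigned long)(page - mem_map_model);
}

/* Linear address of a directly mapped page: base + pfn * page size. */
static unsigned long model_page_address(const struct page_model *page)
{
    return PAGE_OFFSET + (model_page_to_pfn(page) << PAGE_SHIFT);
}
```

In this model the descriptor pointer plays the role of what alloc_pages() returns, and `model_page_address()` gives the kind of value that __get_free_pages() returns.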

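To make the allocation scheme clear, start with a diagram of how the buddy system stores its data.

[Figure: storage layout of the buddy system's data]

That is, each order has its own free_area structure, and each free_area keeps its free memory blocks on linked lists, one list per migrate type.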
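The layout just described can be sketched as a small userspace model. It is a simplified illustration, not the kernel's definitions: the per-type list heads are replaced by block counters (`nr_blocks`, a hypothetical field), only the four migrate types that appear later in this article are modeled, and MAX_ORDER is assumed to be 11.

```c
#include <assert.h>

#define MAX_ORDER 11  /* orders 0..10 (assumed default) */

enum migrate_type {
    MIGRATE_UNMOVABLE,
    MIGRATE_RECLAIMABLE,
    MIGRATE_MOVABLE,
    MIGRATE_RESERVE,
    MIGRATE_TYPES
};

/* Stand-in for struct free_area: the real structure holds one list head
 * per migrate type; this model only counts the blocks on each list. */
struct free_area_model {
    unsigned long nr_blocks[MIGRATE_TYPES]; /* hypothetical: blocks per type */
    unsigned long nr_free;                  /* free blocks of this order */
};

/* Stand-in for the free_area[] array embedded in struct zone. */
struct zone_model {
    struct free_area_model free_area[MAX_ORDER];
};

/* Record one free block of 2^order pages under the given migrate type. */
static void add_free_block(struct zone_model *zone, unsigned int order,
                           enum migrate_type type)
{
    assert(order < MAX_ORDER);
    zone->free_area[order].nr_blocks[type]++;
    zone->free_area[order].nr_free++;
}

/* Total number of free pages tracked by the model. */
static unsigned long total_free_pages(const struct zone_model *zone)
{
    unsigned long pages = 0;

    for (unsigned int order = 0; order < MAX_ORDER; order++)
        pages += zone->free_area[order].nr_free << order;
    return pages;
}
```

One free order-0 block plus one free order-3 block therefore account for 1 + 8 = 9 free pages.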

2. The main allocation function

Let's look at this function (in the UMA case).

#define alloc_pages(gfp_mask, order) \
alloc_pages_node(numa_node_id(), gfp_mask, order)

static inline struct page *alloc_pages_node(int nid, gfp_t gfp_mask,

unsigned int order)

{

/* Unknown node is current node */

if (nid < 0)

nid = numa_node_id();

return __alloc_pages(gfp_mask, order, node_zonelist(nid, gfp_mask));

}

static inline struct page *

__alloc_pages(gfp_t gfp_mask, unsigned int order,

struct zonelist *zonelist)

{

return __alloc_pages_nodemask(gfp_mask, order, zonelist, NULL);

}

The top-level allocation function, __alloc_pages_nodemask()

/*

* This is the 'heart' of the zoned buddy allocator.

*/

/* The top-level allocator tries several strategies in turn */

struct page *

__alloc_pages_nodemask(gfp_t gfp_mask, unsigned int order,

struct zonelist *zonelist, nodemask_t *nodemask)

{

enum zone_type high_zoneidx = gfp_zone(gfp_mask);

struct zone *preferred_zone;

struct page *page;

/* Convert GFP flags to their corresponding migrate type */

int migratetype = allocflags_to_migratetype(gfp_mask);

gfp_mask &= gfp_allowed_mask;

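/* Debugging aid */

lockdep_trace_alloc(gfp_mask);

/* If __GFP_WAIT is set, the caller may have to sleep and be rescheduled */

might_sleep_if(gfp_mask & __GFP_WAIT);

/* Fault-injection hook; does nothing unless the corresponding config macro is set */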

if (should_fail_alloc_page(gfp_mask, order))

return NULL;

/*

* Check the zones suitable for the gfp_mask contain at least one

* valid zone. It's possible to have an empty zonelist as a result

* of GFP_THISNODE and a memoryless node

*/

if (unlikely(!zonelist->_zonerefs->zone))

return NULL;

/* The preferred zone is used for statistics later */

/* As the English comment above says */

first_zones_zonelist(zonelist, high_zoneidx, nodemask, &preferred_zone);

if (!preferred_zone)

return NULL;

/* First allocation attempt */

/* Normal (fast-path) allocation from the pcp lists and the buddy system */

page = get_page_from_freelist(gfp_mask|__GFP_HARDWALL, nodemask, order,

zonelist, high_zoneidx, ALLOC_WMARK_LOW|ALLOC_CPUSET,

preferred_zone, migratetype);

if (unlikely(!page)) /* The fast path failed: use the slow path, which may wait and reclaim */

page = __alloc_pages_slowpath(gfp_mask, order,

zonelist, high_zoneidx, nodemask,

preferred_zone, migratetype);

/* Debugging aid (tracepoint) */

trace_mm_page_alloc(page, order, gfp_mask, migratetype);

return page;

}

3. Normal allocation from the pcp lists and the buddy system

The function get_page_from_freelist()

/*

* get_page_from_freelist goes through the zonelist trying to allocate

* a page.

*/

/* Walk every zone in the zonelist to find memory for the allocation */

static struct page *

get_page_from_freelist(gfp_t gfp_mask, nodemask_t *nodemask, unsigned int order,

struct zonelist *zonelist, int high_zoneidx, int alloc_flags,

struct zone *preferred_zone, int migratetype)

{

struct zoneref *z;

struct page *page = NULL;

int classzone_idx;

struct zone *zone;

nodemask_t *allowednodes = NULL;/* zonelist_cache approximation */

int zlc_active = 0; /* set if using zonelist_cache */

int did_zlc_setup = 0; /* just call zlc_setup() one time */

/* Index of the preferred zone */

classzone_idx = zone_idx(preferred_zone);

zonelist_scan:

/*

* Scan zonelist, looking for a zone with enough free.

* See also cpuset_zone_allowed() comment in kernel/cpuset.c.

*/

/* Try each zone in turn */

for_each_zone_zonelist_nodemask(zone, z, zonelist,

high_zoneidx, nodemask) {

/* Never true on UMA, where NUMA_BUILD is 0 */

if (NUMA_BUILD && zlc_active &&

!zlc_zone_worth_trying(zonelist, z, allowednodes))

continue;

if ((alloc_flags & ALLOC_CPUSET) &&

!cpuset_zone_allowed_softwall(zone, gfp_mask))

goto try_next_zone;

BUILD_BUG_ON(ALLOC_NO_WATERMARKS < NR_WMARK);

/* Watermarks must be honored */

if (!(alloc_flags & ALLOC_NO_WATERMARKS)) {

unsigned long mark;

int ret;

/* Pick the watermark selected by alloc_flags */

mark = zone->watermark[alloc_flags & ALLOC_WMARK_MASK];

/* If the watermark is satisfied, allocate from this zone */

if (zone_watermark_ok(zone, order, mark,

classzone_idx, alloc_flags))

goto try_this_zone;

if (zone_reclaim_mode == 0) /* The watermark check failed and zone reclaim is not enabled */

goto this_zone_full;

/* On UMA the function below simply returns 0 */

ret = zone_reclaim(zone, gfp_mask, order);

switch (ret) {

case ZONE_RECLAIM_NOSCAN:

/* did not scan */

goto try_next_zone;

case ZONE_RECLAIM_FULL:

/* scanned but unreclaimable */

goto this_zone_full;

default:

/* did we reclaim enough */

if (!zone_watermark_ok(zone, order, mark,

classzone_idx, alloc_flags))

goto this_zone_full;

}

}

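try_this_zone: /* This zone's watermark is fine */

/* Order-0 requests are served from the pcp lists (refilled from the buddy system when empty); larger requests go to the buddy system directly */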

page = buffered_rmqueue(preferred_zone, zone, order,

gfp_mask, migratetype);

if (page)

break;

this_zone_full:

if (NUMA_BUILD) /* 0 on UMA */

zlc_mark_zone_full(zonelist, z);

try_next_zone:

if (NUMA_BUILD && !did_zlc_setup && nr_online_nodes > 1) {

/*

* we do zlc_setup after the first zone is tried but only

* if there are multiple nodes make it worthwhile

*/

allowednodes = zlc_setup(zonelist, alloc_flags);

zlc_active = 1;

did_zlc_setup = 1;

}

}

if (unlikely(NUMA_BUILD && page == NULL && zlc_active)) {

/* Disable zlc cache for second zonelist scan */

zlc_active = 0;

goto zonelist_scan;

}

return page; /* Return the page (NULL if nothing was allocated) */

}

The core allocation function, buffered_rmqueue()

/*

* Really, prep_compound_page() should be called from __rmqueue_bulk(). But

* we cheat by calling it from here, in the order > 0 path. Saves a branch

* or two.

*/

/* Order-0 requests are served from the pcp lists; requests with order > 0 go to the buddy system */

static inline

struct page *buffered_rmqueue(struct zone *preferred_zone,

struct zone *zone, int order, gfp_t gfp_flags,

int migratetype)

{

unsigned long flags;

struct page *page;

int cold = !!(gfp_flags & __GFP_COLD); /* Set when the caller passed __GFP_COLD */

int cpu;

again:

cpu = get_cpu();

if (likely(order == 0)) { /* Single-page request: use the pcp lists */

struct per_cpu_pages *pcp;

struct list_head *list;

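/* Find this CPU's pcp for the zone */

pcp = &zone_pcp(zone, cpu)->pcp;

list = &pcp->lists[migratetype]; /* The pcp list for this migrate type */

/* Interrupts must be disabled here: memory reclaim may send IPIs that force
   every CPU to release pages from its per-CPU cache, and interrupt handlers
   may allocate single pages too. */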

local_irq_save(flags);

if (list_empty(list)) { /* The pcp list is empty and must be refilled */

/* Take batch pages from the buddy system;
   batch is the number of pages moved per refill */

pcp->count += rmqueue_bulk(zone, 0,

pcp->batch, list,

migratetype, cold);

/* If the list is still empty, the allocation fails */

if (unlikely(list_empty(list)))

goto failed;

}

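/* If the caller does not care about the hardware cache (not to be confused
   with the per-CPU page cache), hand back the last page on the list */

if (cold)

page = list_entry(list->prev, struct page, lru);

else /* Otherwise take the first page on the list: it was freed to the per-CPU
   cache most recently, so it is more likely to be cache-hot */

page = list_entry(list->next, struct page, lru);

list_del(&page->lru); /* Unlink the page from the pcp list */

pcp->count--; /* One page fewer in the pcp */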

}

else { /* order > 0: bypass the pcp lists and allocate from the buddy system directly */

if (unlikely(gfp_flags & __GFP_NOFAIL)) {

/*

* __GFP_NOFAIL is not to be used in new code.

*

* All __GFP_NOFAIL callers should be fixed so that they

* properly detect and handle allocation failures.

*

* We most definitely don't want callers attempting to

* allocate greater than order-1 page units with

* __GFP_NOFAIL.

*/

WARN_ON_ONCE(order > 1);

}

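/* Disable interrupts and take the zone lock */

spin_lock_irqsave(&zone->lock, flags);

/* Allocate from the buddy free list of the matching order and migrate type */

page = __rmqueue(zone, order, migratetype);

/* 1 << order pages have been handed out; update the zone's free-page counter */

__mod_zone_page_state(zone, NR_FREE_PAGES, -(1 << order));

spin_unlock(&zone->lock); /* Only drop the spinlock here; interrupts are re-enabled after the statistics below are updated */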

if (!page)

goto failed;

}

/* Event statistics, for debugging */

__count_zone_vm_events(PGALLOC, zone, 1 << order);

zone_statistics(preferred_zone, zone);

local_irq_restore(flags); /* Restore interrupts */

put_cpu();

VM_BUG_ON(bad_range(zone, page));

/* Sanity-check the page and finish preparing it. If the page flags are
   corrupted the page is unusable, so go back and try to allocate another one */

if (prep_new_page(page, order, gfp_flags))

goto again;

return page;

failed:

local_irq_restore(flags);

put_cpu();

return NULL;

}
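The hot/cold choice in buffered_rmqueue() can be illustrated with a toy per-CPU list. This is a minimal sketch, not kernel code: `pcp_model`, `pcp_free_hot` and `pcp_alloc` are hypothetical names, pages are plain integers, and a fixed array stands in for the linked list. Index 0 plays the role of the list head.

```c
#include <assert.h>

#define PCP_CAPACITY 8

/* Toy per-CPU page list: index 0 is the head (hot end),
 * index count-1 is the tail (cold end). */
struct pcp_model {
    int pages[PCP_CAPACITY]; /* page numbers, stand-in for struct page */
    int count;
};

/* A freed page is pushed at the head, so the head is the most recently used. */
static void pcp_free_hot(struct pcp_model *pcp, int page)
{
    assert(pcp->count < PCP_CAPACITY);
    for (int i = pcp->count; i > 0; i--)
        pcp->pages[i] = pcp->pages[i - 1];
    pcp->pages[0] = page;
    pcp->count++;
}

/* A normal request takes the head; a cold request takes the tail. */
static int pcp_alloc(struct pcp_model *pcp, int cold)
{
    int page;

    assert(pcp->count > 0);
    if (cold) {
        page = pcp->pages[pcp->count - 1];
    } else {
        page = pcp->pages[0];
        for (int i = 1; i < pcp->count; i++)
            pcp->pages[i - 1] = pcp->pages[i];
    }
    pcp->count--;
    return page;
}
```

After freeing pages 1, 2 and 3 in that order, a normal request gets page 3 (the most recently freed), while a cold request gets page 1.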

3.1 Refilling the pcp cache

rmqueue_bulk() takes batch pages from the buddy system in one go; batch is the number of pages moved per refill.

/*

* Obtain a specified number of elements from the buddy allocator, all under

* a single hold of the lock, for efficiency. Add them to the supplied list.

* Returns the number of new pages which were placed at *list.

*/

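/* Each __rmqueue() call in this function yields a block of 1 << order pages.
   The function is only called from the pcp path (no other caller was found),
   always with order 0, so the return value is simply the number of pages
   added, and the target list is the calling pcp's list. */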

static int rmqueue_bulk(struct zone *zone, unsigned int order,

unsigned long count, struct list_head *list,

int migratetype, int cold)

{

int i;

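spin_lock(&zone->lock); /* The caller has already disabled interrupts; take the zone spinlock before touching the zone */

for (i = 0; i < count; ++i) { /* Repeat count times, taking pages from the buddy system */

/* Take a block from the buddy system */

struct page *page = __rmqueue(zone, order, migratetype);

if (unlikely(page == NULL)) /* Allocation failed */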

break;

/*

* Split buddy pages returned by expand() are received here

* in physical page order. The page is added to the callers and

* list and the list head then moves forward. From the callers

* perspective, the linked list is ordered by page number in

* some conditions. This is useful for IO devices that can

* merge IO requests if the physical pages are ordered

* properly.

*/

if (likely(cold == 0)) /* Put the page at the head or the tail of the per-CPU list, as the caller requested */

list_add(&page->lru, list);

else

list_add_tail(&page->lru, list);

set_page_private(page, migratetype); /* Record the migrate type in page->private */

list = &page->lru;

}

/* Decrease the zone's free-page counter */

__mod_zone_page_state(zone, NR_FREE_PAGES, -(i << order));

spin_unlock(&zone->lock); /* Release the zone spinlock */

return i;

}

3.2 Taking pages from the buddy system

The function __rmqueue()

/*

* Do the hard work of removing an element from the buddy allocator.

* Call me with the zone->lock already held.

*/

/* Tries two strategies in turn to allocate a block of 2^order pages */

static struct page *__rmqueue(struct zone *zone, unsigned int order,

int migratetype)

{

struct page *page;

retry_reserve:

/* Scan upward from the requested order, preferring the free lists of the requested migrate type */

page = __rmqueue_smallest(zone, order, migratetype);

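/*
 * Allocate from the fallback lists when both conditions hold:
 * the fast attempt found no page, so a fallback migrate type is needed;
 * we are not already allocating from the reserve list. The reserve list
 * is the last resort: if it cannot satisfy the request, this zone really
 * has no usable memory left.
 */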

if (unlikely(!page) && migratetype != MIGRATE_RESERVE) {

/* Scan orders from largest to smallest, allocating from the fallback lists */

page = __rmqueue_fallback(zone, order, migratetype);

/*

* Use MIGRATE_RESERVE rather than fail an allocation. goto

* is used because __rmqueue_smallest is an inline function

* and we want just one call site

*/

if (!page) { /* Nothing on the fallback lists either: use the reserve list */

migratetype = MIGRATE_RESERVE;

goto retry_reserve; /* Retry, this time from the reserve list */

}

}

/* Tracing, for debugging */

trace_mm_page_alloc_zone_locked(page, order, migratetype);

return page;

}

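3.2.1 Allocating from the requested migrate type's lists

__rmqueue_smallest(zone, order, migratetype) scans upward from the requested order and allocates from the free lists of the requested migrate type.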

/*

* Go through the free lists for the given migratetype and remove

* the smallest available page from the freelists

*/

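/* Scan upward from the given order; on success return the first page of the
   block, after putting the unused remainder of a split block back on the free lists */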

static inline

struct page *__rmqueue_smallest(struct zone *zone, unsigned int order,

int migratetype)

{

unsigned int current_order;

struct free_area * area;

struct page *page;

/* Find a page of the appropriate size in the preferred list */

for (current_order = order; current_order < MAX_ORDER; ++current_order) {

area = &(zone->free_area[current_order]); /* The free_area for this order */

/* If this area's free list for the migrate type is empty */

if (list_empty(&area->free_list[migratetype]))

continue; /* try the next order */

/* The list is not empty: take its first block */

page = list_entry(area->free_list[migratetype].next,

struct page, lru);

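list_del(&page->lru); /* Remove the block from the buddy free list */

rmv_page_order(page); /* Clear the order stored in the page */

area->nr_free--; /* One free block fewer */

/* Split the block and return the unused halves to the free lists */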

expand(zone, page, order, current_order, area, migratetype);

return page;

}

return NULL;

}

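Splitting memory blocks in the buddy system

Here is the helper function expand(), which splits a larger free block and puts the unused pieces back on the free lists.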

/*

* The order of subdivision here is critical for the IO subsystem.

* Please do not alter this order without good reasons and regression

* testing. Specifically, as large blocks of memory are subdivided,

* the order in which smaller blocks are delivered depends on the order

* they're subdivided in this function. This is the primary factor

* influencing the order in which pages are delivered to the IO

* subsystem according to empirical testing, and this is also justified

* by considering the behavior of a buddy system containing a single

* large block of memory acted on by a series of small allocations.

* This behavior is a critical factor in sglist merging's success.

*

* -- wli

*/

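/* This function handles the following case: the allocator has carved a block
   of order low out of a block of order high, and the remainder of the
   order-high block must be put back into the buddy system */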

static inline void expand(struct zone *zone, struct page *page,

int low, int high, struct free_area *area,

int migratetype)

{

unsigned long size = 1 << high;

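while (high > low) { /* Each pass halves the block and frees the upper half, so what
   remains at the end is exactly one block of order low */

area--; /* Step down to the free_area of the next lower order */

high--; /* The order decreases by one */

size >>= 1; /* Halve the size */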

VM_BUG_ON(bad_range(zone, &page[size]));

/* Put the upper half on the free list for that order */

list_add(&page[size].lru, &area->free_list[migratetype]);

area->nr_free++; /* One more free block */

set_page_order(&page[size], high); /* Record its order */

}

}
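To see what expand() does with concrete numbers, here is a minimal userspace sketch. It reproduces only the arithmetic of the loop: `split_block` and `struct split_piece` are hypothetical names, and instead of touching free lists the function records the page offset and order of each piece that the kernel would put back.

```c
#include <assert.h>
#include <stddef.h>

/* One piece returned to the free lists: where it starts and how big it is. */
struct split_piece {
    unsigned long offset; /* page offset from the start of the original block */
    unsigned int order;   /* the piece spans 2^order pages */
};

/*
 * Model of expand(): a block of order `high` was found, but only order `low`
 * is needed. Each iteration halves the block and gives the upper half back.
 * Writes the pieces to `out` (room for high - low entries is required) and
 * returns how many pieces were produced.
 */
static size_t split_block(unsigned int low, unsigned int high,
                          struct split_piece *out)
{
    unsigned long size = 1UL << high;
    size_t n = 0;

    assert(low <= high);
    while (high > low) {
        high--;
        size >>= 1;
        out[n].offset = size; /* corresponds to &page[size] in expand() */
        out[n].order = high;
        n++;
    }
    return n;
}
```

For an order-0 request served from an order-3 block, this yields pieces at page offsets 4, 2 and 1 with orders 2, 1 and 0; the caller keeps the page at offset 0.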

3.2.2 Allocating from the fallback lists

/* Remove an element from the buddy allocator from the fallback list */

static inline struct page *

__rmqueue_fallback(struct zone *zone, int order, int start_migratetype)

{

struct free_area * area;

int current_order;

struct page *page;

int migratetype, i;

/* Find the largest possible block of pages in the other list */

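/* Search from the highest order down, so that large blocks of the other migrate types are split in preference to small ones, which limits fragmentation */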

for (current_order = MAX_ORDER-1; current_order >= order;

--current_order) {

for (i = 0; i < MIGRATE_TYPES - 1; i++) {

/* Fall back to the next migrate type */

migratetype = fallbacks[start_migratetype][i];

/* MIGRATE_RESERVE handled later if necessary */

/* This function never touches the MIGRATE_RESERVE lists; if it returns NULL,
   the caller allocates from MIGRATE_RESERVE itself */

if (migratetype == MIGRATE_RESERVE)

continue; /* try the next type */

area = &(zone->free_area[current_order]);

/* If the list for this order and type is empty */

if (list_empty(&area->free_list[migratetype]))

continue; /* try the next type */

/* Take the first block of this type and order */

page = list_entry(area->free_list[migratetype].next,

struct page, lru);

area->nr_free--; /* One free block fewer */

/*

* If breaking a large block of pages, move all free

* pages to the preferred allocation list. If falling

* back for a reclaimable kernel allocation, be more

* agressive about taking ownership of free pages

*/

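if (unlikely(current_order >= (pageblock_order >> 1)) || /* The block being split is large: move all of its free pages
   to the requested type's lists to limit fragmentation */

start_migratetype == MIGRATE_RECLAIMABLE || /* Reclaimable allocations tend to come in bursts: moving the whole block
   to the reclaimable lists keeps them from fragmenting the other types */

page_group_by_mobility_disabled) { /* Grouping by mobility is disabled: always move the free pages */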

unsigned long pages;

/* Move the block's free pages to the lists of the requested (start) type */

pages = move_freepages_block(zone, page,

start_migratetype);

/* Claim the whole block if over half of it is free */

/* pages is the number of pages moved; if at least half of the pageblock
   was free, change the migrate type of the whole pageblock */

if (pages >= (1 << (pageblock_order-1)) ||

page_group_by_mobility_disabled)

/* Update the pageblock's migrate type */

set_pageblock_migratetype(page,

start_migratetype);

migratetype = start_migratetype;

}

/* Remove the page from the freelists */

list_del(&page->lru);

rmv_page_order(page);

/* Take ownership for orders >= pageblock_order */

if (current_order >= pageblock_order) /* Blocks of at least pageblock_order: retag every pageblock they cover */

/* Rare in practice: pageblock_order is 10 in this configuration */

change_pageblock_range(page, current_order,

start_migratetype);

/* Split the block; the unused halves go back on the free lists */

expand(zone, page, order, current_order, area, migratetype);

trace_mm_page_alloc_extfrag(page, order, current_order,

start_migratetype, migratetype);

return page;

}

}

return NULL;

}

The fallback table

/*

* This array describes the order lists are fallen back to when

* the free lists for the desirable migrate type are depleted

*/

/* When the lists of the requested type are empty, this array gives the
   order in which the other types are tried */

static int fallbacks[MIGRATE_TYPES][MIGRATE_TYPES-1] = {

[MIGRATE_UNMOVABLE] = { MIGRATE_RECLAIMABLE, MIGRATE_MOVABLE, MIGRATE_RESERVE },

[MIGRATE_RECLAIMABLE] = { MIGRATE_UNMOVABLE, MIGRATE_MOVABLE, MIGRATE_RESERVE },

[MIGRATE_MOVABLE] = { MIGRATE_RECLAIMABLE, MIGRATE_UNMOVABLE, MIGRATE_RESERVE },

[MIGRATE_RESERVE] = { MIGRATE_RESERVE, MIGRATE_RESERVE, MIGRATE_RESERVE }, /* Never used */

};
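The way __rmqueue_fallback() walks this table can be sketched in userspace. This is a simplified model under stated assumptions: `free_blocks[order][type]` is a hypothetical array of block counts standing in for the real free lists, only the four migrate types shown in the table are modeled, MAX_ORDER is assumed to be 11, and `pick_fallback` (a hypothetical name) merely reports which order and type would be chosen.

```c
#include <assert.h>
#include <stdbool.h>

#define MAX_ORDER 11

enum { MIGRATE_UNMOVABLE, MIGRATE_RECLAIMABLE, MIGRATE_MOVABLE,
       MIGRATE_RESERVE, MIGRATE_TYPES };

/* Same fallback order as the table in the article. */
static const int fallbacks[MIGRATE_TYPES][MIGRATE_TYPES - 1] = {
    [MIGRATE_UNMOVABLE]   = { MIGRATE_RECLAIMABLE, MIGRATE_MOVABLE,   MIGRATE_RESERVE },
    [MIGRATE_RECLAIMABLE] = { MIGRATE_UNMOVABLE,   MIGRATE_MOVABLE,   MIGRATE_RESERVE },
    [MIGRATE_MOVABLE]     = { MIGRATE_RECLAIMABLE, MIGRATE_UNMOVABLE, MIGRATE_RESERVE },
    [MIGRATE_RESERVE]     = { MIGRATE_RESERVE,     MIGRATE_RESERVE,   MIGRATE_RESERVE },
};

/*
 * Pick the fallback source for a request of the given order and start type.
 * Orders are scanned from largest to smallest; within an order the types are
 * tried in table order, skipping MIGRATE_RESERVE. Returns true and fills
 * *found_order and *found_type when a non-empty list exists.
 */
static bool pick_fallback(unsigned long free_blocks[MAX_ORDER][MIGRATE_TYPES],
                          int order, int start_type,
                          int *found_order, int *found_type)
{
    assert(order >= 0 && order < MAX_ORDER);
    for (int cur = MAX_ORDER - 1; cur >= order; cur--) {
        for (int i = 0; i < MIGRATE_TYPES - 1; i++) {
            int type = fallbacks[start_type][i];

            if (type == MIGRATE_RESERVE)
                continue;
            if (free_blocks[cur][type] == 0)
                continue;
            *found_order = cur;
            *found_type = type;
            return true;
        }
    }
    return false;
}
```

Note the consequence of scanning orders in the outer loop: for an unmovable request, a free order-5 movable block is chosen before a free order-2 reclaimable block, even though reclaimable comes first in the table row.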

Moving free pages to the lists of another migrate type

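/* Moves the free pages in a range to the free lists of the given type.
   In effect this changes the pages' type, but it does so by moving them
   between lists. It does the same job as move_freepages(), except that
   the range is aligned to a pageblock */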

static int move_freepages_block(struct zone *zone, struct page *page,

int migratetype)

{

unsigned long start_pfn, end_pfn;

struct page *start_page, *end_page;

/* Align to the pageblock boundary; pageblock_nr_pages is 1 << pageblock_order */

start_pfn = page_to_pfn(page);

start_pfn = start_pfn & ~(pageblock_nr_pages-1);

start_page = pfn_to_page(start_pfn);

end_page = start_page + pageblock_nr_pages - 1;

end_pfn = start_pfn + pageblock_nr_pages - 1;

/* Do not cross zone boundaries */

if (start_pfn < zone->zone_start_pfn)

start_page = page;

/* Check the end boundary */

if (end_pfn >= zone->zone_start_pfn + zone->spanned_pages)

return 0;

/* Hand over to move_freepages() */

return move_freepages(zone, start_page, end_page, migratetype);

}
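The alignment arithmetic at the top of move_freepages_block() is easy to check in isolation. The sketch below assumes a pageblock of 1024 pages (a pageblock order of 10, which depends on the configuration); `pageblock_range` is a hypothetical helper, not a kernel function.

```c
#include <assert.h>
#include <stddef.h>

#define PAGEBLOCK_ORDER    10                       /* assumed */
#define PAGEBLOCK_NR_PAGES (1UL << PAGEBLOCK_ORDER) /* 1024 pages */

/* First and last page frame number of the pageblock that contains pfn. */
static void pageblock_range(unsigned long pfn,
                            unsigned long *start_pfn, unsigned long *end_pfn)
{
    assert(start_pfn != NULL && end_pfn != NULL);
    /* Clearing the low bits rounds down to a multiple of the block size;
     * this works only because the block size is a power of two. */
    *start_pfn = pfn & ~(PAGEBLOCK_NR_PAGES - 1);
    *end_pfn = *start_pfn + PAGEBLOCK_NR_PAGES - 1;
}
```

For page frame 1500 this gives the range 1024 to 2047, which is the whole pageblock that move_freepages() would then walk.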

/*

* Move the free pages in a range to the free lists of the requested type.

* Note that start_page and end_pages are not aligned on a pageblock

* boundary. If alignment is required, use move_freepages_block()

*/

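/* Moves the free pages in a range to the free lists of the given type.
   In effect this changes the pages' type, but it does so by moving them between lists */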

static int move_freepages(struct zone *zone,

struct page *start_page, struct page *end_page,

int migratetype)

{

struct page *page;

unsigned long order;

int pages_moved = 0;

#ifndef CONFIG_HOLES_IN_ZONE

/*

* page_zone is not safe to call in this context when

* CONFIG_HOLES_IN_ZONE is set. This bug check is probably redundant

* anyway as we check zone boundaries in move_freepages_block().

* Remove at a later date when no bug reports exist related to

* grouping pages by mobility

*/

BUG_ON(page_zone(start_page) != page_zone(end_page));

#endif

for (page = start_page; page <= end_page;) {

/* Make sure we are not inadvertently changing nodes */

VM_BUG_ON(page_to_nid(page) != zone_to_nid(zone));

if (!pfn_valid_within(page_to_pfn(page))) {

page++;

continue;

}

if (!PageBuddy(page)) {

page++;

continue;

}

order = page_order(page);

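list_del(&page->lru); /* Remove the block from its current free list. Note that this
   moves the whole buddy block headed by this page, not a single page */

list_add(&page->lru, /* Add it to the free list for this order and the new type */

&zone->free_area[order].free_list[migratetype]);

page += 1 << order; /* Skip past the block just moved */

pages_moved += 1 << order; /* Count the pages moved */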

}

return pages_moved;

}

4. The slow path: allocation that may wait and reclaim

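/**
 * When a page cannot be allocated on the fast path and the caller allows
 * waiting, the allocation continues here on the slow path.
 * Memory reclaim is permitted at this point.
 */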

static inline struct page *

__alloc_pages_slowpath(gfp_t gfp_mask, unsigned int order,

struct zonelist *zonelist, enum zone_type high_zoneidx,

nodemask_t *nodemask, struct zone *preferred_zone,

int migratetype)

{

const gfp_t wait = gfp_mask & __GFP_WAIT;

struct page *page = NULL;

int alloc_flags;

unsigned long pages_reclaimed = 0;

unsigned long did_some_progress;

struct task_struct *p = current;

/*

* In the slowpath, we sanity check order to avoid ever trying to

* reclaim >= MAX_ORDER areas which will never succeed. Callers may

* be using allocators in order of preference for an area that is

* too large.

*/ /* Validate the order argument */

if (order >= MAX_ORDER) {

WARN_ON_ONCE(!(gfp_mask & __GFP_NOWARN));

return NULL;

}

/*

* GFP_THISNODE (meaning __GFP_THISNODE, __GFP_NORETRY and

* __GFP_NOWARN set) should not cause reclaim since the subsystem

* (f.e. slab) using GFP_THISNODE may choose to trigger reclaim

* using a larger set of nodes after it has established that the

* allowed per node queues are empty and that nodes are

* over allocated.

*/

/**
 * The caller passed GFP_THISNODE, which means no memory reclaim may be done.
 * After a GFP_THISNODE failure the caller is expected to retry with other flags.
 */

if (NUMA_BUILD && (gfp_mask & GFP_THISNODE) == GFP_THISNODE)

goto nopage;

restart: /* Wake the kswapd threads so they reclaim memory in the background */

wake_all_kswapd(order, zonelist, high_zoneidx);

/*

* OK, we're below the kswapd watermark and have kicked background

* reclaim. Now things get more complex, so set up alloc_flags according

* to how we want to proceed.

*/

/* Derive the internal alloc flags from the GFP flags, mainly to select the watermark */

alloc_flags = gfp_to_alloc_flags(gfp_mask);

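/**
 * Compared with the fast path, the alloc flags used here select a lower
 * watermark. Before starting any reclaim, try the allocation once more
 * against that lower watermark. ALLOC_NO_WATERMARKS is cleared for this
 * attempt regardless of whether the context would allow it.
 */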

/* This is the last chance, in general, before the goto nopage. */

page = get_page_from_freelist(gfp_mask, nodemask, order, zonelist,

high_zoneidx, alloc_flags & ~ALLOC_NO_WATERMARKS,

preferred_zone, migratetype);

if (page) /* Success: a page was found */

goto got_pg;

rebalance:

/* Allocate without watermarks if the context allows */

/* Some contexts, such as a task doing memory reclaim or a task being killed, may ignore the watermarks entirely */

if (alloc_flags & ALLOC_NO_WATERMARKS) {

page = __alloc_pages_high_priority(gfp_mask, order,

zonelist, high_zoneidx, nodemask,

preferred_zone, migratetype);

if (page) /* Got memory by ignoring the watermarks */

goto got_pg;

}

/* Atomic allocations - we can't balance anything */

/* The caller wants an atomic allocation and cannot wait for reclaim: return NULL */

if (!wait)

goto nopage;

/* Avoid recursion of direct reclaim */

/* The caller is itself doing memory reclaim; it must not enter the reclaim path below, or it would deadlock */

if (p->flags & PF_MEMALLOC)

goto nopage;

/* Avoid allocations with no watermarks from looping endlessly */

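/**
 * The current thread is being killed, so it was allowed to ignore the
 * watermarks. Return NULL here to keep the system from looping forever.
 * If the caller cannot tolerate failure (__GFP_NOFAIL), keep looping and
 * wait for other threads to free a little memory.
 */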

if (test_thread_flag(TIF_MEMDIE) && !(gfp_mask & __GFP_NOFAIL))

goto nopage;

/* Try direct reclaim and then allocating */

/**
 * Reclaim memory directly, in the context of the allocating task.
 */

page = __alloc_pages_direct_reclaim(gfp_mask, order,

zonelist, high_zoneidx,

nodemask,

alloc_flags, preferred_zone,

migratetype, &did_some_progress);

if (page) /* Reclaim freed enough memory to satisfy the request */

goto got_pg;

/*

* If we failed to make any progress reclaiming, then we are

* running out of options and have to consider going OOM

*/

/* Reclaim made no progress: the system really is out of memory */

if (!did_some_progress) {

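/**
 * The caller is not filesystem code (filesystem operations are allowed)
 * and retries are allowed. __GFP_FS is presumably required because the
 * OOM path may kill a process or panic, which can involve file operations.
 */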

if ((gfp_mask & __GFP_FS) && !(gfp_mask & __GFP_NORETRY)) {

if (oom_killer_disabled) /* The OOM killer is disabled: return NULL to the caller */

goto nopage;

/**
 * Kill another process, then try the allocation again.
 */

page = __alloc_pages_may_oom(gfp_mask, order,

zonelist, high_zoneidx,

nodemask, preferred_zone,

migratetype);

if (page)

goto got_pg;

/*

* The OOM killer does not trigger for high-order

* ~__GFP_NOFAIL allocations so if no progress is being

* made, there are no other options and retrying is

* unlikely to help.

*/ /* The request is for many pages, so retrying is unlikely to help */

if (order > PAGE_ALLOC_COSTLY_ORDER &&

!(gfp_mask & __GFP_NOFAIL))

goto nopage;

goto restart;

}

}

/* Check if we should retry the allocation */

/* Reclaim freed some memory; decide whether another attempt is worthwhile */

pages_reclaimed += did_some_progress;

if (should_alloc_retry(gfp_mask, order, pages_reclaimed)) {

/* Wait for some write requests to complete then retry */

congestion_wait(BLK_RW_ASYNC, HZ/50);

goto rebalance;

}

nopage:

/* The allocation failed: print a warning */

if (!(gfp_mask & __GFP_NOWARN) && printk_ratelimit()) {

printk(KERN_WARNING "%s: page allocation failure."

" order:%d, mode:0x%x\n",

p->comm, order, gfp_mask);

dump_stack();

show_mem();

}

return page;

got_pg:

/* Reaching this point means the allocation succeeded; run the kmemcheck debugging hook */

if (kmemcheck_enabled)

kmemcheck_pagealloc_alloc(page, order, gfp_mask);

return page;

}

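Summary: the main allocation flow of the Linux buddy system is as follows.

Normal (fast-path) allocation:

1. A single-page request is served from the per-CPU cache; if the cache is empty, it is first refilled with pages taken from the buddy system.

2. A multi-page request is served from the free lists of the requested migrate type; if those do not hold a large enough block, the fallback types' lists are tried, and finally the reserve list.

Slow-path allocation (may wait and reclaim pages):

3. When neither of the above can satisfy the request, the allocator reclaims pages and, as a last resort, kills a process, then tries again.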

(免责声明:文章内容如涉及作品内容、版权和其它问题,请及时与我们联系,我们将在第一时间删除内容,文章内容仅供参考)

相关知识

-

linux一键安装web环境全攻略 在linux系统中怎么一键安装web环境方法

-

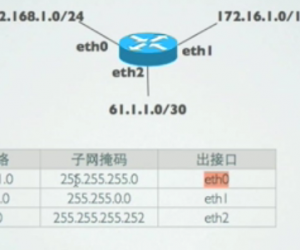

Linux网络基本网络配置方法介绍 如何配置Linux系统的网络方法

-

Linux下DNS服务器搭建详解 Linux下搭建DNS服务器和配置文件

-

对Linux进行详细的性能监控的方法 Linux 系统性能监控命令详解

-

linux系统root密码忘了怎么办 linux忘记root密码后找回密码的方法

-

Linux基本命令有哪些 Linux系统常用操作命令有哪些

-

Linux必学的网络操作命令 linux网络操作相关命令汇总

-

linux系统从入侵到提权的详细过程 linux入侵提权服务器方法技巧

-

linux系统怎么用命令切换用户登录 Linux切换用户的命令是什么

-

在linux中添加普通新用户登录 如何在Linux中添加一个新的用户

软件推荐

更多 >-

1

专为国人订制!Linux Deepin新版发布

专为国人订制!Linux Deepin新版发布2012-07-10

-

2

CentOS 6.3安装(详细图解教程)

-

3

Linux怎么查看网卡驱动?Linux下查看网卡的驱动程序

-

4

centos修改主机名命令

-

5

Ubuntu或UbuntuKyKin14.04Unity桌面风格与Gnome桌面风格的切换

-

6

FEDORA 17中设置TIGERVNC远程访问

-

7

StartOS 5.0相关介绍,新型的Linux系统!

-

8

解决vSphere Client登录linux版vCenter失败

-

9

LINUX最新提权 Exploits Linux Kernel <= 2.6.37

-

10

nginx在网站中的7层转发功能