Linux Memory Management: The Slab Mechanism (Initialization)

Published: 2014-09-05 16:36:53 Author: 知识屋

start_kernel()->mm_init()->kmem_cache_init()

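Execution flow:

1. Initialize the static initkmem_list3 structures, each of which holds three slab lists (full, partial, and free).

2. Point the nodelists field of cache_cache at the lists initialized in step 1.

3. Set slab_break_gfp_order, the cap on how many pages one slab may span, according to the amount of memory.

4. Add cache_cache to the cache_chain list and initialize cache_cache.

5. Create the general caches used by kmalloc:

1) The cache names and sizes live in two parallel arrays; the cache for a given size is found through the size array.

2) First create the caches at indices INDEX_AC and INDEX_L3.

3) Then loop over the size array and create a cache for every remaining size.

6. Replace the static local-cache global variables:

1) Replace the array_cache of cache_cache, which initially points to the static variable initarray_cache.cache.

2) Replace the local cache of malloc_sizes[INDEX_AC].cs_cachep, which initially points to the static variable initarray_generic.cache.

7. Replace the static slab lists:

1) Replace the slab lists of cache_cache, which initially point into the static array initkmem_list3.

2) Replace the slab lists of malloc_sizes[INDEX_AC].cs_cachep, which initially point into the static array initkmem_list3.

8. Update the initialization progress.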
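Steps 6 and 7 follow one pattern: borrow a static object while no allocator exists, then copy its state into dynamically allocated memory and switch the pointer. The sketch below illustrates that pattern in user space. All toy_* names are hypothetical, malloc stands in for kmalloc, and an int stands in for the spinlock; it is not the kernel's code.

```c
#include <assert.h>
#include <stdlib.h>
#include <string.h>

/* Hypothetical, simplified stand-in for the kernel's struct array_cache. */
struct toy_array_cache {
    unsigned int avail;   /* number of cached object pointers */
    unsigned int limit;   /* capacity of entry[] */
    int lock;             /* stand-in for a spinlock */
    void *entry[4];
};

struct toy_cache {
    struct toy_array_cache *array;  /* points at static storage during bootstrap */
};

/* Static placeholder, playing the role of initarray_cache. */
static struct toy_array_cache toy_init_array = { .avail = 0, .limit = 4 };

/* Phase 1: no allocator yet, so borrow the static object. */
static void toy_bootstrap(struct toy_cache *c)
{
    c->array = &toy_init_array;
}

/* Phase 2: the allocator works; move the state into heap memory.
 * Returns 0 on success, -1 if the allocation fails. */
static int toy_replace_static(struct toy_cache *c)
{
    struct toy_array_cache *ptr = malloc(sizeof(*ptr));

    if (!ptr)
        return -1;
    /* Same sanity check as the BUG_ON in the kernel code. */
    assert(c->array == &toy_init_array);
    memcpy(ptr, c->array, sizeof(*ptr));
    ptr->lock = 0;        /* re-initialise the lock instead of trusting memcpy */
    c->array = ptr;
    return 0;
}
```

A caller would run toy_bootstrap() first, use the cache through c->array as usual, and call toy_replace_static() once the allocator is available; objects already recorded in entry[] survive the switch.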

www.zhishiwu.com

/*

* Initialisation. Called after the page allocator have been initialised and

* before smp_init().

*/

void __init kmem_cache_init(void)

{

size_t left_over;

struct cache_sizes *sizes;

struct cache_names *names;

int i;

int order;

int node;

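/* Until the slab allocator is up, kmalloc cannot supply the objects
 * needed during initialization, so statically allocated globals are
 * used instead. Late in slab initialization they are replaced with
 * objects allocated dynamically by kmalloc. */

/* As described above, first borrow the slab lists held in the global
 * initkmem_list3. Every memory node has its own sets of three slab
 * lists. initkmem_list3 is an array of such sets, three per node: one
 * for struct kmem_cache, one for struct arraycache_init, and one for
 * struct kmem_list3. The loop below initializes all sets of all nodes. */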

if (num_possible_nodes() == 1)

use_alien_caches = 0;

/* Initialize the three slab lists of every set on every node */

for (i = 0; i < NUM_INIT_LISTS; i++) {

kmem_list3_init(&initkmem_list3[i]);

/* The slab cache described by the global cache_cache holds every
 * struct kmem_cache object except cache_cache itself. Here its
 * per-node slab list pointers are cleared to NULL. */

if (i < MAX_NUMNODES)

cache_cache.nodelists[i] = NULL;

}

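/* Point the slab lists of the struct kmem_cache cache at one set in
 * initkmem_list3. CACHE_CACHE is the base index of that set within
 * initkmem_list3; the cache for struct kmem_cache is the first cache
 * the kernel creates, so CACHE_CACHE is 0. */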

set_up_list3s(&cache_cache, CACHE_CACHE);

/*

* Fragmentation resistance on low memory - only use bigger

* page orders on machines with more than 32MB of memory.

*/

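/* The global slab_break_gfp_order caps the page order of a slab, that
 * is, how many pages one slab may span, in order to limit
 * fragmentation. Take a 3360-byte object: if its slab spans a single
 * 4096-byte page, 736 bytes are wasted (ignoring management data), and
 * with two pages the waste doubles. The cap may only be exceeded when
 * the object is so large that a slab of this order cannot hold even one
 * object. There are two possible values. With more than 32MB of memory,
 * BREAK_GFP_ORDER_HI (1) is used, so a slab spans at most 2 pages and
 * only objects larger than 8192 bytes can break the
 * slab_break_gfp_order limit. With 32MB or less, BREAK_GFP_ORDER_LO (0)
 * is used. */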

if (totalram_pages > (32 << 20) >> PAGE_SHIFT)

slab_break_gfp_order = BREAK_GFP_ORDER_HI;

/* Bootstrap is tricky, because several objects are allocated

* from caches that do not exist yet:

* 1) initialize the cache_cache cache: it contains the struct

* kmem_cache structures of all caches, except cache_cache itself:

* cache_cache is statically allocated.

* Initially an __init data area is used for the head array and the

* kmem_list3 structures, it's replaced with a kmalloc allocated

* array at the end of the bootstrap.

* 2) Create the first kmalloc cache.

* The struct kmem_cache for the new cache is allocated normally.

* An __init data area is used for the head array.

* 3) Create the remaining kmalloc caches, with minimally sized

* head arrays.

* 4) Replace the __init data head arrays for cache_cache and the first

* kmalloc cache with kmalloc allocated arrays.

* 5) Replace the __init data for kmem_list3 for cache_cache and

* the other cache's with kmalloc allocated memory.

* 6) Resize the head arrays of the kmalloc caches to their final sizes.

*/

node = numa_node_id();

/* 1) create the cache_cache */

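/* Step 1: set up the cache that holds struct kmem_cache objects,
 * described by the global cache_cache. Only the data structure is
 * initialized here; no objects are created until they are first
 * allocated. */

/* The global cache_chain is the head of the kernel's list of slab caches */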

INIT_LIST_HEAD(&cache_chain);

/* Add cache_cache to the list of slab caches */

list_add(&cache_cache.next, &cache_chain);

/* Set the basic colouring unit to the cache line size (architecture dependent; 32 bytes on some CPUs) */

cache_cache.colour_off = cache_line_size();

/* Set up the local cache of cache_cache. kmalloc cannot be used here
 * either, so the statically allocated global initarray_cache is used. */

cache_cache.array[smp_processor_id()] = &initarray_cache.cache;

/* Set up the slab lists, again from a static global */

cache_cache.nodelists[node] = &initkmem_list3[CACHE_CACHE + node];

/*

* struct kmem_cache size depends on nr_node_ids, which

* can be less than MAX_NUMNODES.

*/

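/* buffer_size holds the size of the objects in a slab; here it is the
 * size of struct kmem_cache. nodelists is the last member and
 * nr_node_ids is the number of memory nodes (1 on UMA), so the size is
 * the offset of nodelists plus one struct kmem_list3 pointer per node. */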

cache_cache.buffer_size = offsetof(struct kmem_cache, nodelists) +

nr_node_ids * sizeof(struct kmem_list3 *);

#if DEBUG

cache_cache.obj_size = cache_cache.buffer_size;

#endif

/* Round the object size up to a multiple of the cache line size */

cache_cache.buffer_size = ALIGN(cache_cache.buffer_size,

cache_line_size());

/* Precompute the reciprocal of the object size; it is used to compute an object's index within a slab */

cache_cache.reciprocal_buffer_size =

reciprocal_value(cache_cache.buffer_size);

for (order = 0; order < MAX_ORDER; order++) {

/* Compute how many objects a cache_cache slab of this order holds */

cache_estimate(order, cache_cache.buffer_size,

cache_line_size(), 0, &left_over, &cache_cache.num);

/* A non-zero num means a slab of this order holds at least one struct kmem_cache object, so stop searching */

if (cache_cache.num)

break;

}

BUG_ON(!cache_cache.num);

/* gfporder: each slab spans 2^gfporder pages */

cache_cache.gfporder = order;

/* Size of the colouring area, in units of colour_off */

cache_cache.colour = left_over / cache_cache.colour_off;

/* Size of the slab management data */

cache_cache.slab_size = ALIGN(cache_cache.num * sizeof(kmem_bufctl_t) +

sizeof(struct slab), cache_line_size());

/* 2+3) create the kmalloc caches */

/* Steps 2 and 3: create the general caches used by kmalloc. kmalloc
 * objects are grouped into size classes; malloc_sizes holds the sizes
 * and cache_names holds the cache names. */

sizes = malloc_sizes;

names = cache_names;

/*

* Initialize the caches that provide memory for the array cache and the

* kmem_list3 structures first. Without this, further allocations will

* bug.

*/

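/* First create the general caches that serve struct array_cache and
 * struct kmem_list3; the rest of the initialization depends on them. */

/* INDEX_AC is the index, within the kmalloc sizes, of the size class
 * that fits struct arraycache_init, the object used for local caches.
 * Create the general cache of that size class for the local caches. */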

sizes[INDEX_AC].cs_cachep = kmem_cache_create(names[INDEX_AC].name,

sizes[INDEX_AC].cs_size,

ARCH_KMALLOC_MINALIGN,

ARCH_KMALLOC_FLAGS|SLAB_PANIC,

NULL);

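/* If struct kmem_list3 and struct arraycache_init map to different
 * kmalloc size indices, that is, they fall into different size classes,
 * create a separate cache for struct kmem_list3; otherwise the two
 * share one cache. */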

if (INDEX_AC != INDEX_L3) {

sizes[INDEX_L3].cs_cachep =

kmem_cache_create(names[INDEX_L3].name,

sizes[INDEX_L3].cs_size,

ARCH_KMALLOC_MINALIGN,

ARCH_KMALLOC_FLAGS|SLAB_PANIC,

NULL);

}

/* With these two general caches created, the slab early-init stage is
 * over. Before this point, off-slab management is not allowed. */

slab_early_init = 0;

/* Loop over the size classes and create the kmalloc general caches */

while (sizes->cs_size != ULONG_MAX) {

/*

* For performance, all the general caches are L1 aligned.

* This should be particularly beneficial on SMP boxes, as it

* eliminates "false sharing".

* Note for systems short on memory removing the alignment will

* allow tighter packing of the smaller caches.

*/

/* Create the kmalloc cache of this size class if it does not exist yet.
 * The caches for struct kmem_list3 and struct arraycache_init were
 * already created above. */

if (!sizes->cs_cachep) {

sizes->cs_cachep = kmem_cache_create(names->name,

sizes->cs_size,

ARCH_KMALLOC_MINALIGN,

ARCH_KMALLOC_FLAGS|SLAB_PANIC,

NULL);

}

#ifdef CONFIG_ZONE_DMA

sizes->cs_dmacachep = kmem_cache_create(

names->name_dma,

sizes->cs_size,

ARCH_KMALLOC_MINALIGN,

ARCH_KMALLOC_FLAGS|SLAB_CACHE_DMA|

SLAB_PANIC,

NULL);

#endif

sizes++;

names++;

}

/* At this point the kmalloc general caches exist and can be used */

/* 4) Replace the bootstrap head arrays */

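/* Step 4: replace the statically allocated globals with kmalloc'ed
 * objects. Two static local caches have been used so far: the local
 * cache of cache_cache, pointing to initarray_cache.cache, and the
 * local cache of malloc_sizes[INDEX_AC].cs_cachep, pointing to
 * initarray_generic.cache (see setup_cpu_cache). Both are replaced here. */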

{

struct array_cache *ptr;

/* Allocate memory for the local cache of cache_cache */

ptr = kmalloc(sizeof(struct arraycache_init), GFP_NOWAIT);

BUG_ON(cpu_cache_get(&cache_cache) != &initarray_cache.cache);

/* Copy the original local cache of cache_cache, initarray_cache, to the new location */

memcpy(ptr, cpu_cache_get(&cache_cache),

sizeof(struct arraycache_init));

/*

* Do not assume that spinlocks can be initialized via memcpy:

*/

spin_lock_init(&ptr->lock);

/* Point the local cache of cache_cache at the new location */

cache_cache.array[smp_processor_id()] = ptr;

/* Allocate memory for the local cache of malloc_sizes[INDEX_AC].cs_cachep */

ptr = kmalloc(sizeof(struct arraycache_init), GFP_NOWAIT);

BUG_ON(cpu_cache_get(malloc_sizes[INDEX_AC].cs_cachep)

!= &initarray_generic.cache);

/* Copy the original local cache to the new location; note that at this stage the local cache has a fixed size */

memcpy(ptr, cpu_cache_get(malloc_sizes[INDEX_AC].cs_cachep),

sizeof(struct arraycache_init));

/*

* Do not assume that spinlocks can be initialized via memcpy:

*/

spin_lock_init(&ptr->lock);

malloc_sizes[INDEX_AC].cs_cachep->array[smp_processor_id()] =

ptr;

}

/* 5) Replace the bootstrap kmem_list3's */

/* Step 5: as in step 4, replace the statically allocated slab lists with kmalloc'ed memory */

{

int nid;

/* On UMA there is only one node */

for_each_online_node(nid) {

/* Copy the slab lists of the struct kmem_cache cache */

init_list(&cache_cache, &initkmem_list3[CACHE_CACHE + nid], nid);

/* Copy the slab lists of the struct arraycache_init cache */

init_list(malloc_sizes[INDEX_AC].cs_cachep,

&initkmem_list3[SIZE_AC + nid], nid);

/* Copy the slab lists of the struct kmem_list3 cache */

if (INDEX_AC != INDEX_L3) {

init_list(malloc_sizes[INDEX_L3].cs_cachep,

&initkmem_list3[SIZE_L3 + nid], nid);

}

}

}

/* Record the progress of slab initialization */

g_cpucache_up = EARLY;

}
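The slab_break_gfp_order comment in the function above contains two pieces of arithmetic that are easy to check: the 32MB threshold expressed in pages, and the waste left by 3360-byte objects. The sketch below reproduces both. It assumes 4 KiB pages and ignores slab management data; the toy_* names are hypothetical helpers, not kernel functions.

```c
#include <assert.h>
#include <stddef.h>

#define TOY_PAGE_SHIFT 12                    /* assumption: 4 KiB pages */
#define TOY_PAGE_SIZE  (1UL << TOY_PAGE_SHIFT)

/* Number of pages in 32MB, using the same expression as the kernel check. */
static unsigned long toy_threshold_pages(void)
{
    return (32UL << 20) >> TOY_PAGE_SHIFT;
}

/* Order cap chosen at boot: 1 (HI) above the threshold, 0 (LO) otherwise. */
static int toy_break_order(unsigned long totalram_pages)
{
    return totalram_pages > toy_threshold_pages() ? 1 : 0;
}

/* Bytes left unused when a slab of the given order is packed with
 * obj_size-byte objects, ignoring management data. */
static size_t toy_leftover(size_t obj_size, unsigned int order)
{
    size_t slab = TOY_PAGE_SIZE << order;

    assert(obj_size > 0);
    return slab - (slab / obj_size) * obj_size;
}
```

Under these assumptions the threshold is 8192 pages, and toy_leftover(3360, 0) gives the 736 bytes quoted in the comment, while order 1 still fits only two objects and wastes twice as much.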

Helper operations

1. Initializing the slab lists


static void kmem_list3_init(struct kmem_list3 *parent)

{

INIT_LIST_HEAD(&parent->slabs_full);

INIT_LIST_HEAD(&parent->slabs_partial);

INIT_LIST_HEAD(&parent->slabs_free);

parent->shared = NULL;

parent->alien = NULL;

parent->colour_next = 0;

spin_lock_init(&parent->list_lock);

parent->free_objects = 0;

parent->free_touched = 0;

}

2. Setting up the static slab lists

/* Point a cache's slab lists at the statically allocated globals */

static void __init set_up_list3s(struct kmem_cache *cachep, int index)

{

int node;

/* On UMA there is only one node */

for_each_online_node(node) {

/* The global initkmem_list3 holds the slab lists used during bootstrap */

cachep->nodelists[node] = &initkmem_list3[index + node];

/* Set the next reap time */

cachep->nodelists[node]->next_reap = jiffies +

REAPTIMEOUT_LIST3 +

((unsigned long)cachep) % REAPTIMEOUT_LIST3;

}

}

3. Computing the number of objects per slab


/*

* Calculate the number of objects and left-over bytes for a given buffer size.

*/

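/* Compute the number of objects per slab. */

/*
1) gfporder: the slab consists of 2^gfporder pages.
2) buffer_size: size of one object.
3) align: alignment of the objects.
4) flags: whether the slab management data is on-slab or off-slab.
5) left_over: (output) number of bytes wasted in the slab.
6) num: (output) number of objects in the slab.
*/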

static void cache_estimate(unsigned long gfporder, size_t buffer_size,

size_t align, int flags, size_t *left_over,

unsigned int *num)

{

int nr_objs;

size_t mgmt_size;

/* The slab spans 1 << gfporder pages */

size_t slab_size = PAGE_SIZE << gfporder;

/*

* The slab management structure can be either off the slab or

* on it. For the latter case, the memory allocated for a

* slab is used for:

*

* - The struct slab

* - One kmem_bufctl_t for each object

* - Padding to respect alignment of @align

* - @buffer_size bytes for each object

*

* If the slab management structure is off the slab, then the

* alignment will already be calculated into the size. Because

* the slabs are all pages aligned, the objects will be at the

* correct alignment when allocated.

*/

if (flags & CFLGS_OFF_SLAB) {

/* Off-slab management */

mgmt_size = 0;

/* The slab's pages hold no management data; all of the space stores objects */

nr_objs = slab_size / buffer_size;

/* The object count must not exceed the limit */

if (nr_objs > SLAB_LIMIT)

nr_objs = SLAB_LIMIT;

} else {

/*

* Ignore padding for the initial guess. The padding

* is at most @align-1 bytes, and @buffer_size is at

* least @align. In the worst case, this result will

* be one greater than the number of objects that fit

* into the memory allocation when taking the padding

* into account.

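*/

/* On-slab management: the management data shares the slab's pages with
 * the objects, so the pages hold one struct slab, an array of
 * kmem_bufctl_t, and the objects themselves. The kmem_bufctl_t array
 * has one entry per object. */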

nr_objs = (slab_size - sizeof(struct slab)) /

(buffer_size + sizeof(kmem_bufctl_t));

/*

* This calculated number will be either the right

* amount, or one greater than what we want.

*/

/* If the aligned management data plus the objects exceed the slab size, drop one object */

if (slab_mgmt_size(nr_objs, align) + nr_objs*buffer_size

> slab_size)

nr_objs--;

if (nr_objs > SLAB_LIMIT)

nr_objs = SLAB_LIMIT;

/* Size of the slab management data after alignment */

mgmt_size = slab_mgmt_size(nr_objs, align);

}

*num = nr_objs; /* store the number of objects per slab */

/* Compute the wasted space */

*left_over = slab_size - nr_objs*buffer_size - mgmt_size;

}
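To make the estimate concrete, here is a user-space re-implementation of the same logic that can be run with small numbers. It is a sketch under stated assumptions: 4096-byte pages, a 32-byte struct slab, a 4-byte kmem_bufctl_t, and an illustrative object limit. The real values depend on the architecture and kernel configuration, and every toy_* or TOY_* name is hypothetical.

```c
#include <assert.h>
#include <stddef.h>

#define TOY_PAGE_SIZE  4096UL        /* assumption: 4 KiB pages */
#define TOY_SLAB_HDR   32UL          /* assumed sizeof(struct slab) */
#define TOY_BUFCTL     4UL           /* assumed sizeof(kmem_bufctl_t) */
#define TOY_SLAB_LIMIT 0xFFFFFFFCUL  /* assumed cap on objects per slab */
#define TOY_OFF_SLAB   0x1           /* stand-in for CFLGS_OFF_SLAB */

/* Round x up to a multiple of a. */
static size_t toy_align(size_t x, size_t a)
{
    return (x + a - 1) / a * a;
}

/* Aligned size of the on-slab management data for nr_objs objects. */
static size_t toy_mgmt_size(size_t nr_objs, size_t align)
{
    return toy_align(TOY_SLAB_HDR + nr_objs * TOY_BUFCTL, align);
}

static void toy_cache_estimate(unsigned long order, size_t buffer_size,
                               size_t align, int flags,
                               size_t *left_over, unsigned int *num)
{
    size_t slab_size = TOY_PAGE_SIZE << order;
    size_t nr_objs, mgmt_size;

    assert(buffer_size > 0 && align > 0);
    if (flags & TOY_OFF_SLAB) {
        /* Management data lives elsewhere: every byte holds objects. */
        mgmt_size = 0;
        nr_objs = slab_size / buffer_size;
        if (nr_objs > TOY_SLAB_LIMIT)
            nr_objs = TOY_SLAB_LIMIT;
    } else {
        /* First guess ignores the alignment padding ... */
        nr_objs = (slab_size - TOY_SLAB_HDR) / (buffer_size + TOY_BUFCTL);
        /* ... so it may be one too many once padding is counted. */
        if (nr_objs > 0 &&
            toy_mgmt_size(nr_objs, align) + nr_objs * buffer_size > slab_size)
            nr_objs--;
        if (nr_objs > TOY_SLAB_LIMIT)
            nr_objs = TOY_SLAB_LIMIT;
        mgmt_size = toy_mgmt_size(nr_objs, align);
    }
    *num = (unsigned int)nr_objs;
    *left_over = slab_size - nr_objs * buffer_size - mgmt_size;
}
```

With these assumed sizes, a one-page slab of 256-byte objects aligned to 64 bytes holds 15 objects on-slab (128 bytes of management data, 128 bytes left over) and 16 objects off-slab. The "one too many" correction only triggers when the padding does not fit, for example with 1348-byte objects.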

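Helper data structures and variables

The kernel keeps all general caches in arrays ordered by size so that they are easy to look up. malloc_sizes[] is an array of struct cache_sizes that holds the size of each cache; cache_names[] is an array of struct cache_names that holds the name of each cache. The two arrays share indices: the cache named by cache_names[i] serves objects of size malloc_sizes[i].cs_size.
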

/* Size description struct for general caches. */

struct cache_sizes {

size_t cs_size;

struct kmem_cache *cs_cachep;

#ifdef CONFIG_ZONE_DMA

struct kmem_cache *cs_dmacachep;

#endif

};

/*

* These are the default caches for kmalloc. Custom caches can have other sizes.

*/

struct cache_sizes malloc_sizes[] = {

#define CACHE(x) { .cs_size = (x) },

#include <linux/kmalloc_sizes.h>

CACHE(ULONG_MAX)

#undef CACHE

};


/* Must match cache_sizes above. Out of line to keep cache footprint low. */

struct cache_names {

char *name;

char *name_dma;

};

static struct cache_names __initdata cache_names[] = {

#define CACHE(x) { .name = "size-" #x, .name_dma = "size-" #x "(DMA)" },

#include <linux/kmalloc_sizes.h>

{NULL,}

#undef CACHE

};
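The two arrays above are generated from one list of sizes by redefining the CACHE() macro before each include, and the size array ends with a sentinel. The sketch below mimics that idea in a single file and adds a lookup that walks the array until the sentinel. The size list, the toy_* names, and the use of SIZE_MAX as the sentinel are assumptions for illustration; the real list comes from linux/kmalloc_sizes.h and varies with configuration.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical stand-in for the list in <linux/kmalloc_sizes.h>. */
#define TOY_KMALLOC_SIZES(CACHE) \
    CACHE(32) CACHE(64) CACHE(128) CACHE(256) CACHE(512) CACHE(1024)

struct toy_cache_sizes {
    size_t cs_size;
};

/* First expansion: the sizes, terminated by a sentinel entry. */
#define TOY_AS_SIZE(x) { .cs_size = (x) },
static const struct toy_cache_sizes toy_sizes[] = {
    TOY_KMALLOC_SIZES(TOY_AS_SIZE)
    { .cs_size = SIZE_MAX }
};

/* Second expansion: the names, in the same order as the sizes. */
#define TOY_AS_NAME(x) "size-" #x,
static const char *const toy_names[] = {
    TOY_KMALLOC_SIZES(TOY_AS_NAME)
    NULL
};

/* Index of the smallest size class that fits `size`, or -1 if none does. */
static int toy_size_index(size_t size)
{
    int i = 0;

    assert(size > 0);
    while (toy_sizes[i].cs_size != SIZE_MAX) {
        if (size <= toy_sizes[i].cs_size)
            return i;
        i++;
    }
    return -1;
}
```

Because both arrays expand from the same list, toy_names[toy_size_index(n)] always names the class that serves an n-byte request, which is the index correspondence described in the paragraph above.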

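II. Initialization late in kernel boot

1. Compute the upper limit on the number of objects in a local cache from the object size.

2. Use the helper structure ccupdate_struct to manipulate the per-CPU local caches, allocating a local cache for every online CPU.

3. Replace the old local caches with the newly allocated ones.

4. Update the slab lists and the per-node shared local cache.

Second-stage code walkthrough

start_kernel()->kmem_cache_init_late()

/* Slab initialization happens in two parts: the basics are set up first, and the remaining features are configured once system initialization is mostly done. */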

void __init kmem_cache_init_late(void)

{

struct kmem_cache *cachep;

/* During bootstrap the local caches have a fixed size; recompute it from the object size */

/* 6) resize the head arrays to their final sizes */

mutex_lock(&cache_chain_mutex);

list_for_each_entry(cachep, &cache_chain, next)

if (enable_cpucache(cachep, GFP_NOWAIT))

BUG();

mutex_unlock(&cache_chain_mutex);

/* Done! */

/* All done: the general caches are finally fully set up */

g_cpucache_up = FULL;

/* Annotate slab for lockdep -- annotate the malloc caches */

init_lock_keys();

/*

* Register a cpu startup notifier callback that initializes

* cpu_cache_get for all new cpus

*/

/* Register the CPU-up callback, which configures the local cache when a CPU comes up */

register_cpu_notifier(&cpucache_notifier);

/*

* The reap timers are started later, with a module init call: That part

* of the kernel is not yet operational.

*/

}

/* Called with cache_chain_mutex held always */

/* Local cache setup */

static int enable_cpucache(struct kmem_cache *cachep, gfp_t gfp)

{

int err;

int limit, shared;

/*

* The head array serves three purposes:

* - create a LIFO ordering, i.e. return objects that are cache-warm

* - reduce the number of spinlock operations.

* - reduce the number of linked list operations on the slab and

* bufctl chains: array operations are cheaper.

* The numbers are guessed, we should auto-tune as described by

* Bonwick.

*/

/* Compute the upper limit on the number of objects in a local cache from the object size */

if (cachep->buffer_size > 131072)

limit = 1;

else if (cachep->buffer_size > PAGE_SIZE)

limit = 8;

else if (cachep->buffer_size > 1024)

limit = 24;

else if (cachep->buffer_size > 256)

limit = 54;

else

limit = 120;

/*

* CPU bound tasks (e.g. network routing) can exhibit cpu bound

* allocation behaviour: Most allocs on one cpu, most free operations

* on another cpu. For these cases, an efficient object passing between

* cpus is necessary. This is provided by a shared array. The array

* replaces Bonwick's magazine layer.

* On uniprocessor, it's functionally equivalent (but less efficient)

* to a larger limit. Thus disabled by default.

*/

shared = 0;

/* On multiprocessor systems, enable the shared local cache; it will hold shared * batchcount entries */

if (cachep->buffer_size <= PAGE_SIZE && num_possible_cpus() > 1)

shared = 8;

#if DEBUG

/*

* With debugging enabled, large batchcount lead to excessively long

* periods with disabled local interrupts. Limit the batchcount

*/

if (limit > 32)

limit = 32;

#endif

/* Configure the local cache */

err = do_tune_cpucache(cachep, limit, (limit + 1) / 2, shared, gfp);

if (err)

printk(KERN_ERR "enable_cpucache failed for %s, error %d.\n",

cachep->name, -err);

return err;

}
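The tuning rules in enable_cpucache can be tried out in isolation. The sketch below copies the thresholds from the function above into a small user-space helper. It assumes 4096-byte pages, takes the CPU count as a parameter instead of calling num_possible_cpus(), and leaves out the DEBUG clamp; struct toy_tuning and toy_tune are hypothetical names.

```c
#include <assert.h>
#include <stddef.h>

#define TOY_PAGE_SIZE 4096UL   /* assumption: 4 KiB pages */

/* The three values that enable_cpucache hands to do_tune_cpucache. */
struct toy_tuning {
    int limit;       /* capacity of each per-CPU local cache */
    int batchcount;  /* objects moved per refill or flush */
    int shared;      /* multiplier for the per-node shared cache */
};

static struct toy_tuning toy_tune(size_t buffer_size, int ncpus)
{
    struct toy_tuning t;

    assert(buffer_size > 0 && ncpus > 0);
    /* Larger objects get smaller local caches. */
    if (buffer_size > 131072)
        t.limit = 1;
    else if (buffer_size > TOY_PAGE_SIZE)
        t.limit = 8;
    else if (buffer_size > 1024)
        t.limit = 24;
    else if (buffer_size > 256)
        t.limit = 54;
    else
        t.limit = 120;

    /* The shared cache is only used for small objects on multiprocessors. */
    t.shared = (buffer_size <= TOY_PAGE_SIZE && ncpus > 1) ? 8 : 0;

    /* Half the limit, rounded up. */
    t.batchcount = (t.limit + 1) / 2;
    return t;
}
```

For a 64-byte object on a four-CPU machine this yields limit 120, batchcount 60, and shared 8; for an 8192-byte object the limit drops to 8 and the shared cache is disabled.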

/* Always called with the cache_chain_mutex held */

/* Configure the local cache, the shared local cache, and the slab lists */

static int do_tune_cpucache(struct kmem_cache *cachep, int limit,

int batchcount, int shared, gfp_t gfp)

{

struct ccupdate_struct *new;

int i;

new = kzalloc(sizeof(*new), gfp);

if (!new)

return -ENOMEM;

/* Allocate a new struct array_cache for every online CPU */

for_each_online_cpu(i) {

new->new[i] = alloc_arraycache(cpu_to_node(i), limit,

batchcount, gfp);

if (!new->new[i]) {

for (i--; i >= 0; i--)

kfree(new->new[i]);

kfree(new);

return -ENOMEM;

}

}

new->cachep = cachep;

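/* Replace the old struct array_cache objects with the new ones. On
 * systems with CPU hotplug, an offline CPU may still hold a local cache
 * that was never freed (see __kmem_cache_destroy), and reconfiguring
 * the local caches when the CPU comes up does not help. Consider two
 * CPUs, A and B. B goes down and cache X is destroyed; because B is
 * down, B's local cache in X is not freed. Later B comes back up and
 * the local caches of all caches on cache_chain are updated, but by
 * then the cache X object has been returned to cache_cache, so its
 * local cache for B is not updated. Later still, a new cache is created
 * and is handed the old cache X object, whose entry for B is still the
 * stale local cache and has to be replaced here. */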

on_each_cpu(do_ccupdate_local, (void *)new, 1);

check_irq_on();

cachep->batchcount = batchcount;

cachep->limit = limit;

cachep->shared = shared;

/* Release the old local caches */

for_each_online_cpu(i) {

struct array_cache *ccold = new->new[i];

if (!ccold)

continue;

spin_lock_irq(&cachep->nodelists[cpu_to_node(i)]->list_lock);

/* Return the objects held in the old local cache */

free_block(cachep, ccold->entry, ccold->avail, cpu_to_node(i));

spin_unlock_irq(&cachep->nodelists[cpu_to_node(i)]->list_lock);

/* Free the old struct array_cache itself */

kfree(ccold);

}

kfree(new);

/* Set up the shared local cache and the slab lists */

return alloc_kmemlist(cachep, gfp);

}

Updating the local cache

/* Update the struct array_cache of the CPU this runs on; it is invoked on every CPU */

static void do_ccupdate_local(void *info)

{

struct ccupdate_struct *new = info;

struct array_cache *old;

check_irq_off();

old = cpu_cache_get(new->cachep);

/* Point at the new struct array_cache */

new->cachep->array[smp_processor_id()] = new->new[smp_processor_id()];

/* Save the old struct array_cache so the caller can release it */

new->new[smp_processor_id()] = old;

}
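The key trick in do_ccupdate_local is that the same array carries the new pointers in and the old pointers out, which is why do_tune_cpucache can afterwards walk new->new[] to free the old local caches. The following user-space sketch shows only that swap. A plain loop stands in for on_each_cpu, the CPU count is fixed at 4, and all toy_* names are hypothetical (the field is called fresh here rather than new).

```c
#include <assert.h>

#define TOY_NR_CPUS 4   /* assumption: a fixed, small CPU count */

struct toy_ac {
    int limit;
};

struct toy_cache {
    struct toy_ac *array[TOY_NR_CPUS];   /* one local cache per CPU */
};

/* Stand-in for ccupdate_struct: the cache plus one pointer slot per CPU. */
struct toy_ccupdate {
    struct toy_cache *cachep;
    struct toy_ac *fresh[TOY_NR_CPUS];
};

/* What each CPU does: install its new local cache and leave the old one
 * behind in the same slot. */
static void toy_ccupdate_local(struct toy_ccupdate *u, int cpu)
{
    struct toy_ac *old;

    assert(cpu >= 0 && cpu < TOY_NR_CPUS);
    old = u->cachep->array[cpu];
    u->cachep->array[cpu] = u->fresh[cpu];
    u->fresh[cpu] = old;
}

/* Stand-in for on_each_cpu(): run the update once per CPU. */
static void toy_on_each_cpu(struct toy_ccupdate *u)
{
    for (int cpu = 0; cpu < TOY_NR_CPUS; cpu++)
        toy_ccupdate_local(u, cpu);
}
```

After toy_on_each_cpu() returns, u->fresh[] holds exactly the pointers that were installed before the call, so the caller can drain and free them one by one.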

/* Set up the shared local cache and the slab lists. Newly created slab lists contain no slabs. */

static int alloc_kmemlist(struct kmem_cache *cachep, gfp_t gfp)

{

int node;

struct kmem_list3 *l3;

struct array_cache *new_shared;

struct array_cache **new_alien = NULL;

for_each_online_node(node) {

/* NUMA only */

if (use_alien_caches) {

new_alien = alloc_alien_cache(node, cachep->limit, gfp);

if (!new_alien)

goto fail;

}

new_shared = NULL;

if (cachep->shared) {

/* Allocate the shared local cache */

new_shared = alloc_arraycache(node,

cachep->shared*cachep->batchcount,

0xbaadf00d, gfp);

if (!new_shared) {

free_alien_cache(new_alien);

goto fail;

}

}

/* Fetch the existing slab lists */

l3 = cachep->nodelists[node];

if (l3) {

/* The old slab list pointer is not NULL, so release the old resources first */

struct array_cache *shared = l3->shared;

spin_lock_irq(&l3->list_lock);

/* Return the objects held in the old shared local cache */

if (shared)

free_block(cachep, shared->entry,

shared->avail, node);

/* Switch to the new shared local cache */

l3->shared = new_shared;

if (!l3->alien) {

l3->alien = new_alien;

new_alien = NULL;

}

/* Compute the upper limit on free objects for this node */

l3->free_limit = (1 + nr_cpus_node(node)) *

cachep->batchcount + cachep->num;

spin_unlock_irq(&l3->list_lock);

/* Free the struct array_cache of the old shared local cache */

kfree(shared);

free_alien_cache(new_alien);

continue; /* move on to the next node */

}

/* No existing l3: allocate a new set of slab lists */

l3 = kmalloc_node(sizeof(struct kmem_list3), gfp, node);

if (!l3) {

free_alien_cache(new_alien);

kfree(new_shared);

goto fail;

}

/* Initialize the slab lists */

kmem_list3_init(l3);

l3->next_reap = jiffies + REAPTIMEOUT_LIST3 +

((unsigned long)cachep) % REAPTIMEOUT_LIST3;

l3->shared = new_shared;

l3->alien = new_alien;

l3->free_limit = (1 + nr_cpus_node(node)) *

cachep->batchcount + cachep->num;

cachep->nodelists[node] = l3;

}

return 0;

fail:

if (!cachep->next.next) {

/* Cache is not active yet. Roll back what we did */

node--;

while (node >= 0) {

if (cachep->nodelists[node]) {

l3 = cachep->nodelists[node];

kfree(l3->shared);

free_alien_cache(l3->alien);

kfree(l3);

cachep->nodelists[node] = NULL;

}

node--;

}

}

return -ENOMEM;

}
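Two sizes in alloc_kmemlist are simple products that are worth working through once: the capacity of the shared local cache and the per-node free_limit. The helpers below restate both formulas from the function above; the toy_* names and the sample numbers in the note are illustrative only.

```c
#include <assert.h>

/* Entries in the per-node shared local cache: shared * batchcount. */
static unsigned int toy_shared_entries(unsigned int shared,
                                       unsigned int batchcount)
{
    return shared * batchcount;
}

/* Upper limit on free objects kept on one node:
 * (1 + CPUs on the node) * batchcount + objects per slab. */
static unsigned int toy_free_limit(unsigned int cpus_on_node,
                                   unsigned int batchcount,
                                   unsigned int objs_per_slab)
{
    assert(batchcount > 0);
    return (1 + cpus_on_node) * batchcount + objs_per_slab;
}
```

For example, with shared = 8 and batchcount = 60 the shared cache has room for 480 object pointers, and a node with 4 CPUs and 59 objects per slab gets a free_limit of 5 * 60 + 59 = 359.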

One more helper function:

/* Allocate a struct array_cache object. */

static struct array_cache *alloc_arraycache(int node, int entries,

int batchcount, gfp_t gfp)

{

/* The entry array directly follows struct array_cache, so both are allocated in one request */

int memsize = sizeof(void *) * entries + sizeof(struct array_cache);

struct array_cache *nc = NULL;

/* Allocate the local cache object; kmalloc_node takes it from a general cache */

nc = kmalloc_node(memsize, gfp, node);

/*

* The array_cache structures contain pointers to free object.

* However, when such objects are allocated or transfered to another

* cache the pointers are not cleared and they could be counted as

* valid references during a kmemleak scan. Therefore, kmemleak must

* not scan such objects.

*/

kmemleak_no_scan(nc);

/* Initialize the local cache */

if (nc) {

nc->avail = 0;

nc->limit = entries;

nc->batchcount = batchcount;

nc->touched = 0;

spin_lock_init(&nc->lock);

}

return nc;

}
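The memsize computation above allocates the header and the pointer array in one block. A minimal user-space sketch of the same layout follows, using a C99 flexible array member and malloc in place of kmalloc_node. The push and pop helpers are added here only to show the LIFO use of entry[]; they and the other toy_* names are hypothetical, not kernel functions.

```c
#include <assert.h>
#include <stdlib.h>

/* Simplified stand-in for struct array_cache (no lock, no NUMA node). */
struct toy_array_cache {
    unsigned int avail;       /* number of pointers currently stored */
    unsigned int limit;       /* capacity of entry[] */
    unsigned int batchcount;
    unsigned int touched;
    void *entry[];            /* storage follows the header directly */
};

/* One allocation covers the header and `entries` pointers. */
static struct toy_array_cache *toy_alloc_arraycache(int entries, int batchcount)
{
    size_t memsize;
    struct toy_array_cache *nc;

    assert(entries > 0);
    memsize = sizeof(void *) * (size_t)entries + sizeof(struct toy_array_cache);
    nc = malloc(memsize);
    if (nc) {
        nc->avail = 0;
        nc->limit = (unsigned int)entries;
        nc->batchcount = (unsigned int)batchcount;
        nc->touched = 0;
    }
    return nc;
}

/* Store a freed object; returns -1 when the cache is full. */
static int toy_push(struct toy_array_cache *ac, void *obj)
{
    if (ac->avail >= ac->limit)
        return -1;
    ac->entry[ac->avail++] = obj;
    return 0;
}

/* Hand out the most recently freed object (LIFO), or NULL when empty. */
static void *toy_pop(struct toy_array_cache *ac)
{
    if (ac->avail == 0)
        return NULL;
    return ac->entry[--ac->avail];
}
```

Keeping the array behind the header means one allocation and one free per local cache, and the last object pushed is the first one popped, which matches the cache-warm LIFO ordering mentioned in the enable_cpucache comment.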

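The source code above touches on slab allocation and freeing, which later articles in this series will cover in turn. The slab data structures, working mechanism, and overall framework will be summarized after the analysis of slab creation and freeing, which should give a better understanding of the slab mechanism. Reading how things work straight from the code is also more convincing, and it is a good habit.

Excerpted from bullbat's column (bullbat的专栏)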

(免责声明:文章内容如涉及作品内容、版权和其它问题,请及时与我们联系,我们将在第一时间删除内容,文章内容仅供参考)

相关知识

-

linux一键安装web环境全攻略 在linux系统中怎么一键安装web环境方法

-

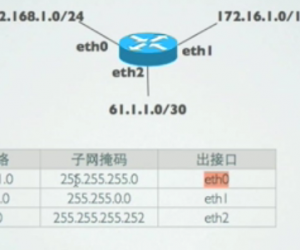

Linux网络基本网络配置方法介绍 如何配置Linux系统的网络方法

-

Linux下DNS服务器搭建详解 Linux下搭建DNS服务器和配置文件

-

对Linux进行详细的性能监控的方法 Linux 系统性能监控命令详解

-

linux系统root密码忘了怎么办 linux忘记root密码后找回密码的方法

-

Linux基本命令有哪些 Linux系统常用操作命令有哪些

-

Linux必学的网络操作命令 linux网络操作相关命令汇总

-

linux系统从入侵到提权的详细过程 linux入侵提权服务器方法技巧

-

linux系统怎么用命令切换用户登录 Linux切换用户的命令是什么

-

在linux中添加普通新用户登录 如何在Linux中添加一个新的用户

软件推荐

更多 >-

1

专为国人订制!Linux Deepin新版发布

专为国人订制!Linux Deepin新版发布2012-07-10

-

2

CentOS 6.3安装(详细图解教程)

-

3

Linux怎么查看网卡驱动?Linux下查看网卡的驱动程序

-

4

centos修改主机名命令

-

5

Ubuntu或UbuntuKyKin14.04Unity桌面风格与Gnome桌面风格的切换

-

6

FEDORA 17中设置TIGERVNC远程访问

-

7

StartOS 5.0相关介绍,新型的Linux系统!

-

8

解决vSphere Client登录linux版vCenter失败

-

9

LINUX最新提权 Exploits Linux Kernel <= 2.6.37

-

10

nginx在网站中的7层转发功能